Google is currently in the middle of rolling out the largest change to the way search works since RankBrain was released almost five years ago.

Bidirectional Encoder Representations from Transformers (or BERT for short) is nicely explained in Google’s announcement post, so I won’t regurgitate information that is already out there. But to summarise, this is Google’s next step in upgrading conversational search: applying a new type of neural network model, called a transformer, to search.

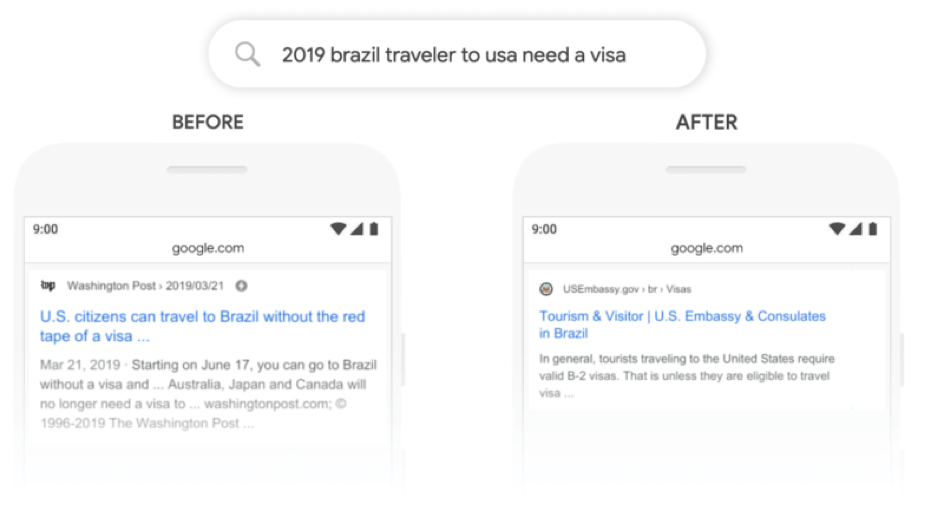

Transformers within the BERT algorithm allow Google to better understand the nuances of the way a question is asked by considering the entire sentence and how the words relate to one another.

I’d recommend looking at some specific examples within the Google blog post for more details.

It’s also worth noting that all of the examples given are long-tail informational queries. So the 10% of searches that BERT is expected to affect are less likely to be shorter or commercial queries, simply because there are fewer words for the BERT model to work its magic on.

Hummingbird, RankBrain, now BERT

The best way to view BERT is as a continuation of Google’s efforts to improve conversational search, which started back in 2013 with the release of Hummingbird.

With Hummingbird, Google started to better understand the meaning of individual words within a query by improving its understanding of entities through the Knowledge Graph.

When RankBrain was released, Google started to better understand longer and more complex queries and to process negatives better.

Now that BERT has been released, Google is starting to understand not just individual words and their meaning, but also the intent of a query when a combination of words is used in a specific order.

So, how do I optimise for BERT?

In reality, nothing should change in how content creators and SEOs perform their day-to-day work.

If you’ve been paying attention to how conversational search has been developing, creating content that answers user questions in a succinct and factually correct manner shouldn’t be anything new to you.

This is exactly what you need to keep doing to benefit from BERT. Sites that see uplifts are simply doing a better job of answering whatever the question is.

I’ve dropped from the BERT update, what do I do?!

It’s worth understanding that if you have lost traffic with this update, it’s because you never really should have ranked well for that query in the first place.

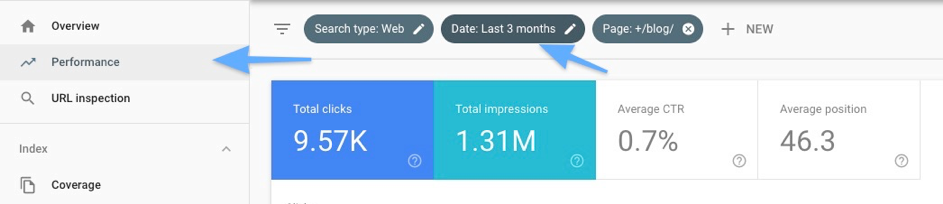

The best way to figure out why you’ve dropped is to use Google Search Console (GSC) and look at each query you have dropped for. You should only have dropped for a query if BERT has decided that you haven’t answered what the user is actually asking.

It’s still very early to investigate a potential drop, but here is an example of what the process would look like.

First, head to the Performance report in GSC and adjust the date filter:

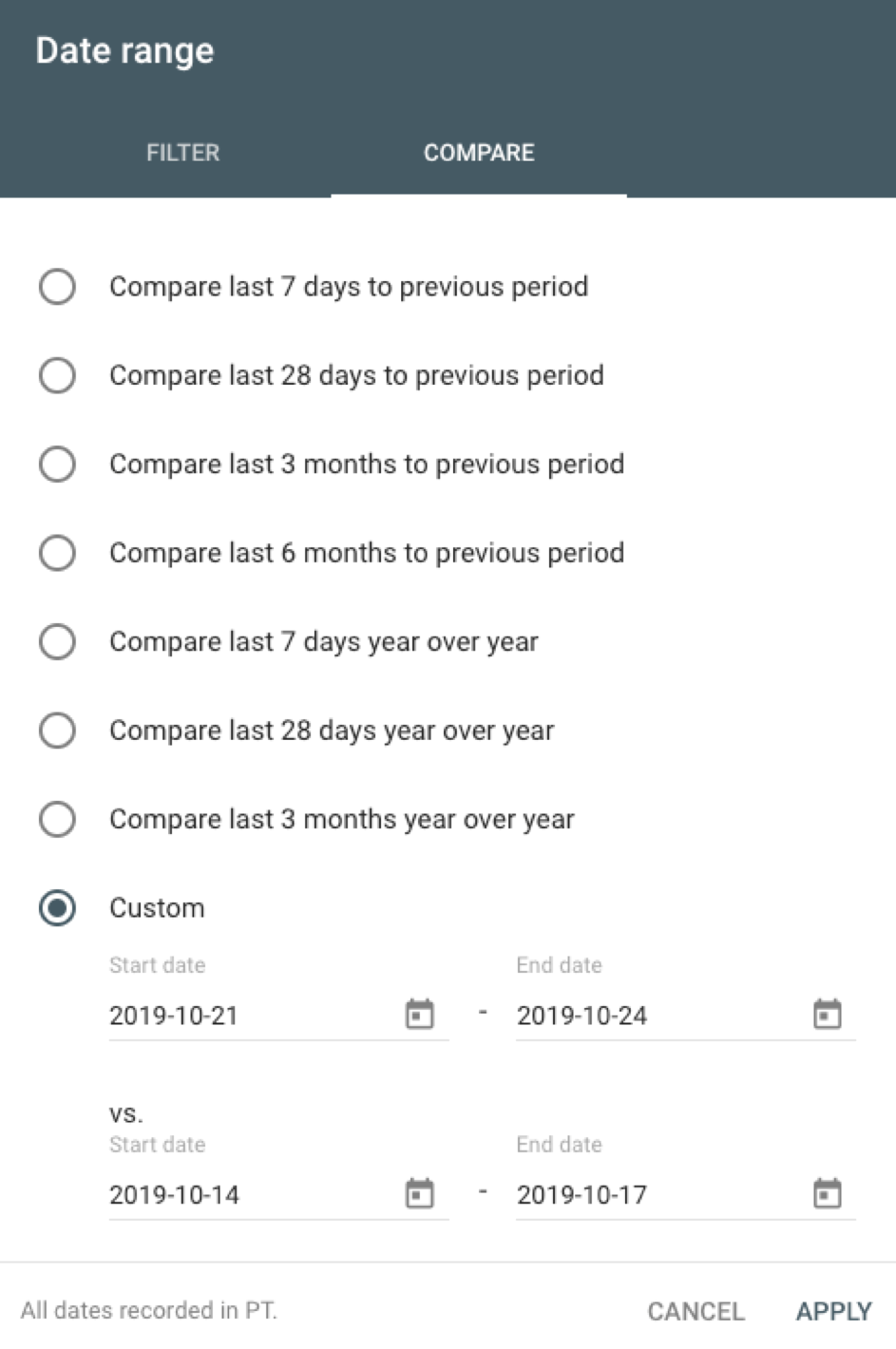

The algorithm has been rolling out this week, so we’ll compare Monday to Thursday this week with the same days last week:

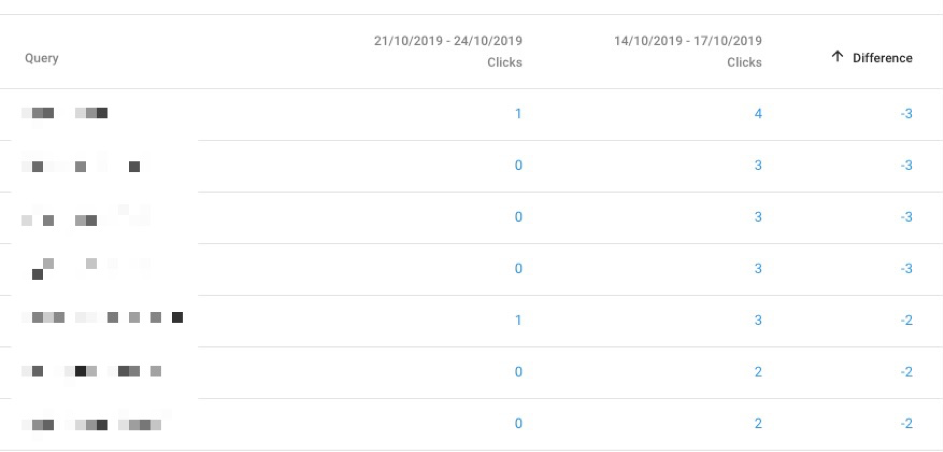

Next, look at the queries that have had significant drops by filtering by the difference. You’ll want to try to spot longer-tail informational queries.
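If you’d rather work outside the GSC interface, the same filtering step can be sketched in Python, assuming you already have query-level click counts for both date ranges. The `QueryRow` shape, the question-word list and both thresholds are hypothetical choices for illustration, not anything GSC or Google defines, and treating “four or more words, starting with a question word” as long-tail informational is only a rough heuristic.

```python
from dataclasses import dataclass

# Hypothetical question words and thresholds: tune these for your own site.
QUESTION_WORDS = {"how", "what", "why", "when", "where", "who", "which",
                  "can", "do", "does", "is", "are", "should"}
MIN_WORDS = 4   # queries with at least this many words count as "long-tail"
MIN_DROP = 10   # ignore click drops smaller than this


@dataclass
class QueryRow:
    """One query with its clicks in each comparison period (hypothetical shape)."""
    query: str
    clicks_before: int  # e.g. Monday to Thursday last week
    clicks_after: int   # e.g. Monday to Thursday this week


def is_long_tail_informational(query: str) -> bool:
    """Rough heuristic: a long query that starts with a question word."""
    words = query.lower().split()
    return len(words) >= MIN_WORDS and words[0] in QUESTION_WORDS


def find_candidate_drops(rows: list[QueryRow]) -> list[tuple[str, int]]:
    """Return (query, click difference) for long-tail informational drops, largest drop first."""
    candidates = []
    for row in rows:
        difference = row.clicks_after - row.clicks_before
        if difference <= -MIN_DROP and is_long_tail_informational(row.query):
            candidates.append((row.query, difference))
    # Most negative difference first, mirroring a sort on the difference column.
    return sorted(candidates, key=lambda pair: pair[1])


if __name__ == "__main__":
    sample = [
        QueryRow("how to optimise content for voice search", 120, 60),
        QueryRow("seo agency", 300, 250),
        QueryRow("what is a featured snippet in google", 80, 75),
    ]
    for query, difference in find_candidate_drops(sample):
        print(f"{difference:+d}  {query}")
```

With the sample data, only the first query is returned: “seo agency” is too short to count as long-tail, and the third query’s drop of 5 clicks is below the threshold.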

Once you’ve identified which queries have dropped, click on them and switch to the ‘Pages’ tab to see the ranking page.

The next step is to improve that page so it better answers the user’s query.

Consider all the normal on-page SEO tips like having the question within a header tag and keeping the answer nice and succinct immediately under the header. If you’d like to expand on your answer more, do that after you’ve succinctly answered the question.

Writing this way makes it much easier for users to find the answer they are looking for when scanning your content.
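To check existing pages against this “question in a header, succinct answer straight after” structure, here is a minimal sketch using Python’s built-in `html.parser`. It pairs each question-style header with the first paragraph that follows it and counts that paragraph’s words. Looking only at `h2`/`h3`, treating a header as a question only if it ends in “?”, and the 50-word cut-off are all hypothetical heuristics, not Google guidance.

```python
from html.parser import HTMLParser
from typing import Optional

HEADER_TAGS = {"h2", "h3"}  # assumed: questions live in h2/h3 headers
MAX_ANSWER_WORDS = 50       # hypothetical cut-off for a "succinct" answer


def _normalise(chunks: list[str]) -> str:
    """Join text chunks and collapse runs of whitespace."""
    return " ".join("".join(chunks).split())


class QuestionAnswerAudit(HTMLParser):
    """Pairs each question-style header with the first <p> that follows it."""

    def __init__(self) -> None:
        super().__init__()
        self.pairs: list[tuple[str, str]] = []
        self._in_header = False
        self._in_paragraph = False
        self._pending_question: Optional[str] = None
        self._chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADER_TAGS:
            self._in_header = True
            self._chunks = []
        elif tag == "p" and self._pending_question is not None:
            self._in_paragraph = True
            self._chunks = []

    def handle_endtag(self, tag):
        if tag in HEADER_TAGS and self._in_header:
            self._in_header = False
            text = _normalise(self._chunks)
            # Only headers phrased as questions are tracked.
            self._pending_question = text if text.endswith("?") else None
        elif tag == "p" and self._in_paragraph:
            self._in_paragraph = False
            self.pairs.append((self._pending_question, _normalise(self._chunks)))
            self._pending_question = None

    def handle_data(self, data):
        # Collect text (including text inside nested tags like <strong>).
        if self._in_header or self._in_paragraph:
            self._chunks.append(data)


def audit(html: str) -> list[tuple[str, int, bool]]:
    """Return (question, answer word count, is_succinct) for each question header."""
    parser = QuestionAnswerAudit()
    parser.feed(html)
    parser.close()
    results = []
    for question, answer in parser.pairs:
        count = len(answer.split())
        results.append((question, count, count <= MAX_ANSWER_WORDS))
    return results
```

Note that `html.parser` does not infer implied closing tags, so a `<p>` that is never explicitly closed won’t be counted; a more forgiving HTML parser would cope better with real-world markup.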

At the same time, consider optimising the snippet and answering the question in a machine-readable way. For example, phrase your answer in a way that has a clear subject, predicate and object.

Final thoughts

BERT is just another step towards Google’s goal of understanding language and intent, so it shouldn’t come as a surprise. This is especially true because models like BERT are key for Google: voice search needs higher word accuracy to avoid frustrating users.

It will be interesting to see some real-world impacts of this algorithm update, so if you have seen any, feel free to comment below or tweet me at @SamUnderwoodUK.


Proudly part of IPG Mediabrands