Technical SEO has fallen out of fashion somewhat in the past few years. Content marketing is more accessible, easier to implement and easier to get buy-in for. Everyone feels they know content, whereas very few people within a business will have a concrete opinion on technical issues and recommendations.

Choosing to ignore technical recommendations will lead to diminished returns from your content marketing efforts. This is why we focus heavily on technical SEO with every client who needs it, and resolve critical issues before moving on to the creative work.

There is no point creating an interactive piece if the site is filled with low value spun content, or has an index bloat issue preventing the indexation of new content.

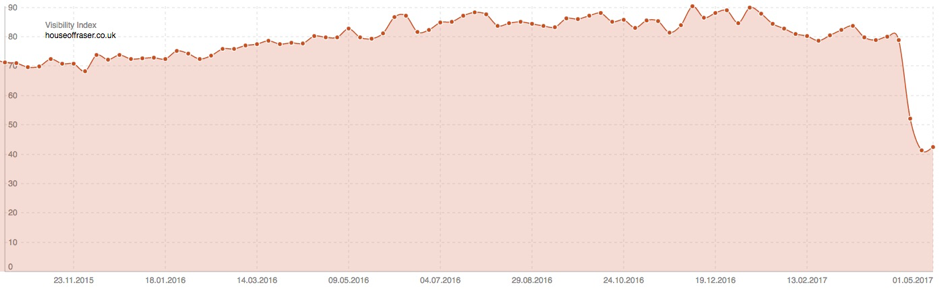

Department store group House of Fraser are finding this out the hard way right now. On April 4 2017, House of Fraser moved from HTTP to HTTPS. According to Sistrix, they have since lost 50% of their UK organic visibility in a two-week period:

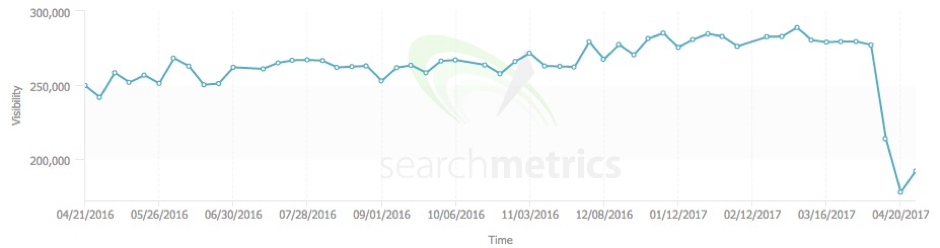

Searchmetrics is also showing a similarly spectacular drop:

This post will take a dive into the mistakes House of Fraser have made, provide recommendations for what they should have done prior to the launch - and what they can do now to claw back some of that lost visibility.

Broken Backlinks

The first step when migrating any site is to consolidate link equity to the new URLs through redirects. In spite of what Google says, these need to be 301 redirected in a single step to prevent any unnecessary loss (as House of Fraser have found out).

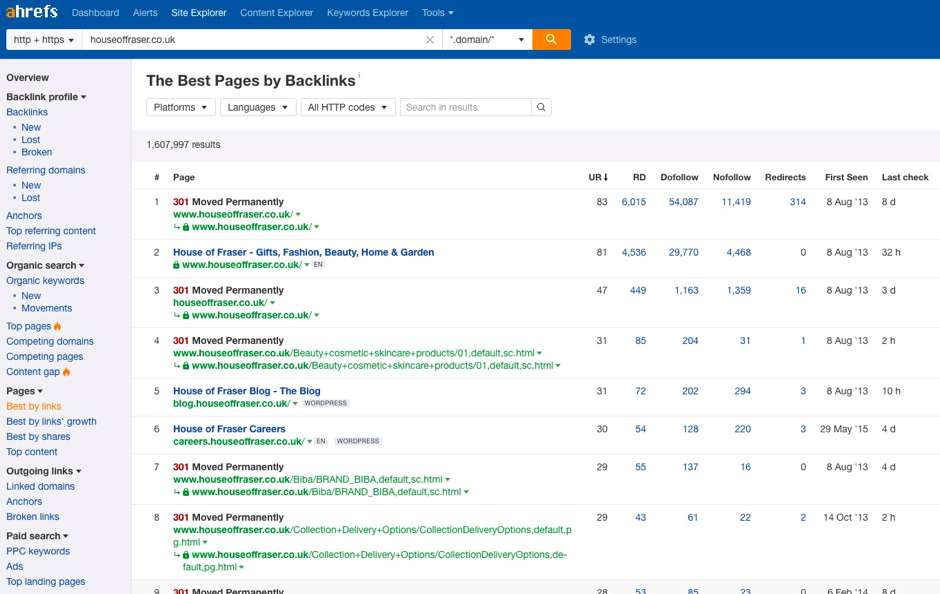

Using Ahrefs' best pages by backlinks report, we can see every House of Fraser page with external links pointing to it:

Exporting these pages and running them through a Screaming Frog crawl to follow the pages to their destination uncovers one major issue with this migration.

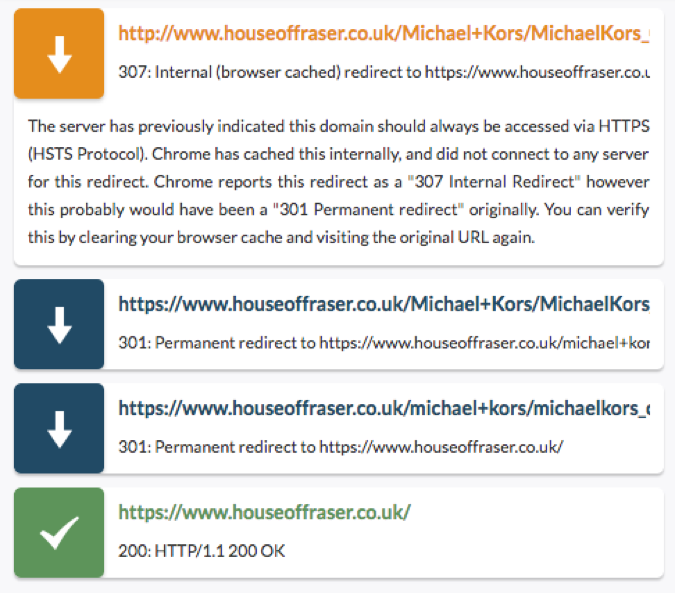

75% of the top 1,000 pages go through redirect chains like this one, which has links from 85 referring domains:

We can see from the above chain that House of Fraser have changed their URL structure at the same time, which requires additional investigation.

Redirect Chains

They have gone from the following structure:

https://www.houseoffraser.co.uk/Dining+Room+Furniture/5042,default,sc.html

to:

https://www.houseoffraser.co.uk/home-and-furniture/dining-room-furniture/05.5042.0.0.0.0.pl

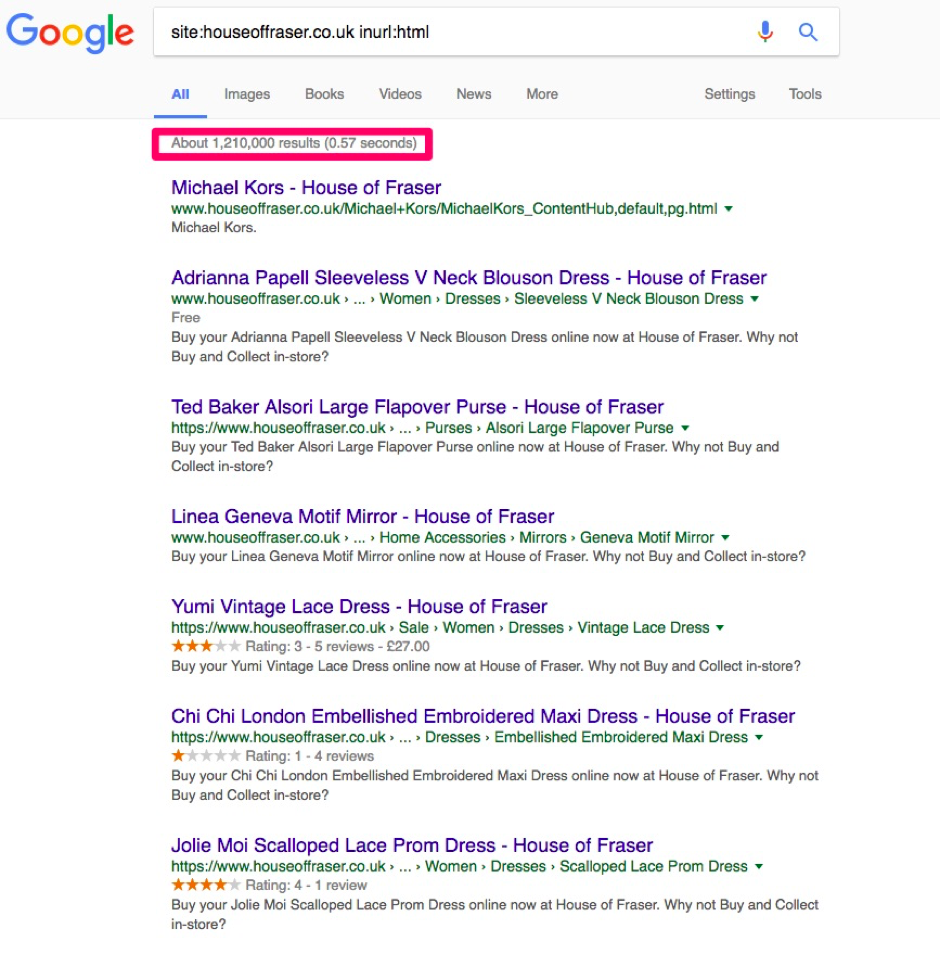

If we use advanced search operators to search for URLs using the old structure, we find lots more issues:

The indexation status returns 1.2 million old URLs still indexed.

It will take Google time to crawl all the old URLs, follow the redirects then drop the old pages from the index so this number will likely fall. However, if we follow a few of the links we see that best practice has not been followed.

This pattern looks very much the same as the redirects implemented on the top pages; a 307 redirect to force https which is followed by an additional redirect to force the URL into lower case, before resolving to the destination URL.

An additional problem here is that it resolves to the homepage, which will be treated as a soft-404 by Google and the redirect will not be followed, so this page will remain indexed.

Nevertheless, when following the old .html pages, in every case we encountered the redirect which forces URLs into lower case before redirecting to the destination URL.
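
The chain pattern described above (a 307 to force https, a redirect to lower case, then the destination) can be reproduced offline to count hops. This is a minimal sketch, assuming a hand-built redirect table; the `example.com` URLs and the `trace_chain` helper are hypothetical and are not taken from the House of Fraser crawl.

```python
from typing import Dict, List, Tuple

# Hypothetical redirect table: URL -> (status code, Location header).
# These URLs are illustrative only, not real crawl data.
REDIRECTS: Dict[str, Tuple[int, str]] = {
    "http://www.example.com/Dining+Room/": (307, "https://www.example.com/Dining+Room/"),
    "https://www.example.com/Dining+Room/": (301, "https://www.example.com/dining+room/"),
    "https://www.example.com/dining+room/": (301, "https://www.example.com/home/dining-room/"),
}


def trace_chain(
    url: str, redirects: Dict[str, Tuple[int, str]], max_hops: int = 10
) -> List[Tuple[int, str]]:
    """Return the (status, target) hops taken when starting from `url`."""
    hops: List[Tuple[int, str]] = []
    seen = {url}
    while url in redirects and len(hops) < max_hops:
        status, target = redirects[url]
        hops.append((status, target))
        if target in seen:  # redirect loop: stop rather than spin
            break
        seen.add(target)
        url = target
    return hops


hops = trace_chain("http://www.example.com/Dining+Room/", REDIRECTS)
for status, target in hops:
    print(status, target)

# The goal for a migration is exactly one hop, and that hop should be a 301.
print("hops:", len(hops), "| single 301:", len(hops) == 1 and hops[0][0] == 301)
```

Any URL that reports more than one hop, or a first hop that is not a 301, is a candidate for a direct redirect to its final destination.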

URL Restructure

Previously the House of Fraser website used functioning parameters to handle much of its content. For example, the men’s biker jackets page was served on the following URL:

With the migration, House of Fraser attempted to move to a cleaner URL structure, which is recommended. Handling important content through functioning parameters is not recommended, as it is rarely internally linked to correctly, nor is it as shareable. House of Fraser decided to move these URLs across to static URLs, but this has not worked correctly in every instance.

Following the above URL takes us (after the anticipated chains) to the following URL:

https://www.houseoffraser.co.uk/men/coats-and-jackets/02.203.0.0.0.0.pl

This is the general men's coats & jackets page, not the biker jackets page. The keyword "mens biker jackets" has 8,100 searches per month, and House of Fraser's ranking for it has gone from P.4 to P.56, where the following page now ranks:

https://www.houseoffraser.co.uk/men/coats-and-jackets/superdry/02.203.0.0034775.0.0.pl

And not the preferred:

https://www.houseoffraser.co.uk/men/coats-and-jackets/biker-jacket/02.203.0025601.0.0.0.pl

What’s worse is some of the redirects are just plain wrong.

House of Fraser were ranking P.3 for mens hoodies on the following URL:

http://www.houseoffraser.co.uk/Men's+Hoodies/

This now redirects to a 404 page:

https://www.houseoffraser.co.uk/men's+hoodies

Instead of the new page:

https://www.houseoffraser.co.uk/men/mens-hoodies/02.2072.0.0.0.0.pl

This has caused the page to drop out of the rankings for the term altogether.

Blocking Key Pages

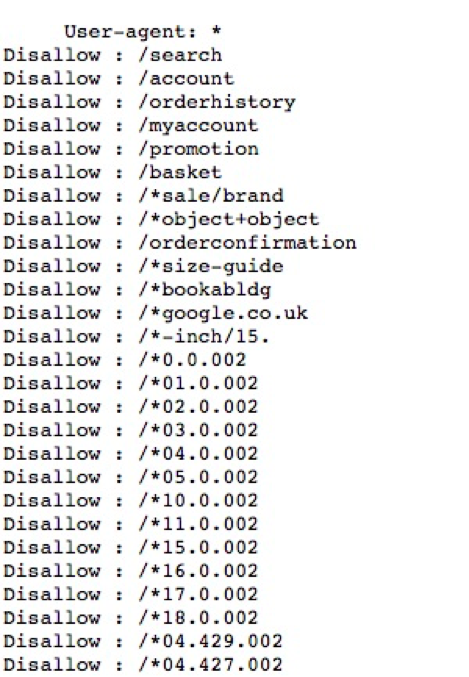

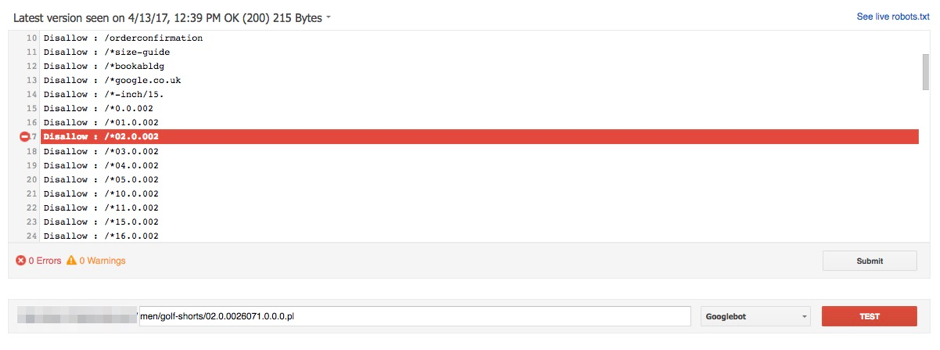

The new URL structure appends a string of digits to each URL. It's difficult to understand what is going on here, but what we do know is that they have erroneously blocked key pages with their robots.txt.

https://www.houseoffraser.co.uk/robots.txt

Using the robots.txt tester in Search Console, I copied over the entire robots.txt and tested a few URLs to see if they were being blocked. For example:

https://www.houseoffraser.co.uk/men/golf-shorts/02.0.0026071.0.0.0.pl

The presence of a wildcard (*) is causing some URL strings to be blocked where they should not have been (we’re assuming at least).
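
To illustrate how a wildcard can catch more than intended, here is a minimal sketch of Google-style robots.txt pattern matching, where `*` matches any run of characters and a trailing `$` anchors the end of the URL. The rule `/*.00*` is hypothetical (the actual House of Fraser rules are not reproduced here), and the sketch only evaluates Disallow rules, ignoring Allow rules and rule precedence. Python's built-in `urllib.robotparser` is not used because it does not implement these wildcards.

```python
import re
from typing import Iterable


def rule_to_regex(rule: str) -> re.Pattern:
    """Translate a robots.txt path rule with * and $ wildcards into a regex."""
    anchored = rule.endswith("$")
    body = rule[:-1] if anchored else rule
    # Escape the literal parts and let each * match any run of characters.
    pattern = ".*".join(re.escape(part) for part in body.split("*"))
    return re.compile("^" + pattern + ("$" if anchored else ""))


def is_blocked(path: str, disallow_rules: Iterable[str]) -> bool:
    """True if any Disallow rule matches the path from its first character."""
    return any(rule_to_regex(rule).match(path) for rule in disallow_rules)


# Hypothetical rule, assumed for illustration only.
rules = ["/*.00*"]

for path in (
    "/men/golf-shorts/02.0.0026071.0.0.0.pl",
    "/men/coats-and-jackets/02.203.0.0.0.0.pl",
):
    print(path, "blocked" if is_blocked(path, rules) else "allowed")
```

Running a list of key landing pages through a check like this before launch would show which of them a broad wildcard rule catches by accident.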

What House of Fraser Should Have Done

A combination of a migration to https and a change in URL structure has led to one of the largest visibility drops by a major brand - ever.

The simple fact of the matter is that House of Fraser bit off more than they could chew. Migrating to https is a big enough job without having to worry about URL structures changing and the associated redirects here.

Here is what House of Fraser should have done, or can still do, to turn around their dramatic drop.

Fix Broken Backlinks

While Google have explicitly stated that there is nothing in the algorithm that causes a loss of link equity between redirects, this is a classic example of their white truths: something that is factually true but empirically false. We know through observation that redirect chains cause a loss of rankings.

There might not be something in the algorithm that says a redirect will lose 'X' amount of rankings. What is actually happening is redirect steps cause confusion, wherein not all of the ranking signals will be consolidated to the destination URL. Each additional unnecessary step presents the opportunity for some ranking signal to be misplaced. While signals A, B and C might all make it to the destination URL, signals D and E didn’t, so rankings decreased.

Any URLs with backlinks should resolve to an appropriate destination page within a single step to avoid this confusion.

To do this, begin by finding every link to your website. Use as many sources as you have available to you - Google Search Console, Ahrefs, Majestic, Moz, etc. Consolidate this data into one spreadsheet and remove duplicates.
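
If the exports are large, the consolidation can be scripted rather than done by hand. The following is a minimal sketch, assuming each tool's export has already been reduced to a plain list of linked-to URLs; the sample lists and the `normalise` and `merge_exports` helpers are hypothetical. Path case is deliberately preserved, since mixed-case URLs may be distinct pages or redirect sources.

```python
from urllib.parse import urlsplit, urlunsplit


def normalise(url: str) -> str:
    """Lower-case the scheme and host and drop the fragment; keep path and query."""
    parts = urlsplit(url.strip())
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), parts.path or "/", parts.query, "")
    )


def merge_exports(*exports):
    """Combine several lists of linked-to URLs into one sorted, de-duplicated list."""
    return sorted(
        {normalise(url) for export in exports for url in export if url.strip()}
    )


# Hypothetical sample data standing in for two tools' exports.
ahrefs = ["http://WWW.example.com/Page", " http://www.example.com/Page#top"]
search_console = ["http://www.example.com/Page", "http://www.example.com"]

merged = merge_exports(ahrefs, search_console)
print(merged)
```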

Gather all the destination pages which have links pointing to them and run them through Screaming Frog. Make sure you select 'always follow redirects'.

This will give you a complete list of your pages, their current status code, and if they go through redirects.

Any pages currently redirecting will need to be amended to point to the new version of the website (either the https, or the new URL structure), and if the redirect has chains then each subsequent step will also need to be redirected to the new page.
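
Pointing every step of a chain at the final page can be worked out mechanically once the existing redirects are in a lookup table. This is a minimal sketch, assuming the redirects are available as a simple source-to-target mapping; the `flatten` helper and the `example.com` URLs are hypothetical.

```python
def flatten(redirects: dict) -> dict:
    """Map every source URL straight to the last URL in its chain."""
    flat = {}
    for source in redirects:
        target, seen = redirects[source], {source}
        # Walk the chain until we reach a URL that does not redirect.
        # The `seen` set stops the walk if the chain loops back on itself.
        while target in redirects and target not in seen:
            seen.add(target)
            target = redirects[target]
        flat[source] = target
    return flat


# Hypothetical chain: force https, then lower case, then the new structure.
chain = {
    "http://www.example.com/Old+Page/": "https://www.example.com/Old+Page/",
    "https://www.example.com/Old+Page/": "https://www.example.com/old+page/",
    "https://www.example.com/old+page/": "https://www.example.com/new-page/",
}

single_step = flatten(chain)
for source, destination in single_step.items():
    print(source, "->", destination)
```

Each entry in the result can then be implemented as one 301, so that no legacy URL passes through an intermediate step.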

Any page without redirects will need to be mapped to the most appropriate URL on the site.

Avoid homepage redirects as these will not be followed. Should the page not have an appropriate new page on the site to redirect it to then let this page 404 - it is natural to have some 404s so do not strain to find a connection where there isn’t one. If the dead page has lots of valuable links then it will be worth creating a page on the new site to redirect to. Even if it is a competition that has expired, just adjusting the messaging to state this will still secure vital link equity.
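
Homepage redirects are easy to miss in a long mapping file, so it can help to flag them automatically. A minimal sketch, assuming the redirect map is a source-to-destination dictionary; the `homepage_redirects` helper and the URLs are hypothetical.

```python
from urllib.parse import urlsplit


def homepage_redirects(redirect_map: dict) -> list:
    """Return the source URLs whose destination is the bare homepage."""
    flagged = []
    for source, destination in redirect_map.items():
        parts = urlsplit(destination)
        if parts.path in ("", "/") and not parts.query:
            flagged.append(source)
    return flagged


# Hypothetical redirect map for illustration.
redirect_map = {
    "http://www.example.com/Expired+Competition/": "https://www.example.com/",
    "http://www.example.com/Old+Page/": "https://www.example.com/new-page/",
}

flagged = homepage_redirects(redirect_map)
print(flagged)
```

Each flagged URL then needs a decision: map it to a genuinely relevant page, create one if the links justify it, or let it 404.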

Redirect All URLs With Organic Landing Traffic

Failure to redirect pages with organic landing traffic will mean that traffic will not be transferred to the new website. These pages are indexed and ranking for terms that are sending you traffic, so leaving them unredirected will cause a definite decline in your bottom line.

To get all your organic landing traffic data go into the report in Google Analytics and choose the last 12 months as your date range.

If the data is sampled (which it most probably will be) then extract it using the largest date range possible without sampling. Then combine these extracts across the full 12 months to get an accurate understanding.
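
Splitting the year into smaller windows and summing the results can be sketched as follows. This assumes each window's export has already been reduced to a mapping of landing page to sessions; the 30-day window size, the sample figures and both helper names are hypothetical, and the window length that actually avoids sampling has to be found by trial in your own account.

```python
from collections import Counter
from datetime import date, timedelta


def date_windows(start: date, end: date, days: int):
    """Yield consecutive (window_start, window_end) pairs covering start..end inclusive."""
    current = start
    while current <= end:
        window_end = min(current + timedelta(days=days - 1), end)
        yield current, window_end
        current = window_end + timedelta(days=1)


def combine(exports):
    """Sum sessions per landing page across a list of {landing_page: sessions} exports."""
    totals = Counter()
    for export in exports:
        totals.update(export)  # Counter.update adds counts rather than replacing them
    return totals


# Twelve months split into 30-day windows (window size is an assumption).
windows = list(date_windows(date(2016, 4, 4), date(2017, 4, 3), days=30))
print(len(windows), "windows, first:", windows[0], "last:", windows[-1])

# Hypothetical per-window exports.
totals = combine([{"/a": 5, "/b": 1}, {"/a": 2, "/c": 4}])
print(totals.most_common())
```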

Pull these URLs out and run them through Screaming Frog again to understand if there are redirects already in play, then map all the redirects as before.

Test Before Publishing

This step is self-explanatory, but often overlooked. By this point you should have a master list of every URL on your site that either has backlinks, or has had some traffic to it within a year, and you will also have all your redirects.

Uploading these redirects to the development environment, then crawling every URL, will give you an understanding of whether your redirects have worked.

This step is only as good as the input. If you don’t have every URL to begin with, then you’re not going to see if the redirects have worked.
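
Comparing the crawl output against the redirect map can be automated. The sketch below assumes the crawl results have been exported as a list of (status, target) hops per source URL; the data, the `check_redirects` helper and its problem labels are hypothetical and do not reflect any particular crawler's export format.

```python
def check_redirects(expected: dict, crawl: dict) -> dict:
    """Return {source: problem} for every URL that fails the single-301 rule."""
    problems = {}
    for source, destination in expected.items():
        hops = crawl.get(source)
        if hops is None:
            problems[source] = "not crawled"
        elif len(hops) != 1:
            problems[source] = f"{len(hops)} hops"
        elif hops[0][0] != 301:
            problems[source] = f"status {hops[0][0]}"
        elif hops[0][1] != destination:
            problems[source] = f"wrong destination {hops[0][1]}"
    return problems


# Hypothetical redirect map and staging crawl results.
expected = {
    "http://www.example.com/Old+Page/": "https://www.example.com/new-page/",
    "http://www.example.com/Other/": "https://www.example.com/other/",
    "http://www.example.com/Missed/": "https://www.example.com/missed/",
}
crawl = {
    "http://www.example.com/Old+Page/": [(301, "https://www.example.com/new-page/")],
    "http://www.example.com/Other/": [
        (307, "https://www.example.com/Other/"),
        (301, "https://www.example.com/other/"),
    ],
}

problems = check_redirects(expected, crawl)
for source, problem in problems.items():
    print(source, "->", problem)
```

The "not crawled" case matters as much as the others: it is the check that catches URLs missing from the input list.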

Keep a Sitemap of The Old URLs Live For Six Months

Google recommend that you keep a sitemap with all your legacy URLs live after a URL migration such as this. This makes it easier for Google to find and crawl all the old pages, thus aiding the passing of ranking signals to the new URLs. Without this step, it will take much longer for Google to follow all the legacy redirects.
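
A legacy sitemap can be generated directly from the master list of old URLs. This is a minimal sketch using Python's standard library (the `xml_declaration` argument needs Python 3.8 or later); the URLs and helper names are hypothetical. The sitemap protocol limits each file to 50,000 URLs, so a large site will need several files.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # limit set by the sitemap protocol


def build_sitemap(urls) -> bytes:
    """Serialise a list of URLs as a sitemap XML document."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


def chunked(urls, size=MAX_URLS_PER_FILE):
    """Split the URL list into slices that each fit in one sitemap file."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


# Hypothetical legacy URLs in the style of the old structure.
legacy_urls = [
    "https://www.example.com/Old+Page/1,default,sc.html",
    "https://www.example.com/Other+Page/2,default,sc.html",
]

for index, batch in enumerate(chunked(legacy_urls), start=1):
    xml_bytes = build_sitemap(batch)
    print(f"legacy-sitemap-{index}.xml:", len(batch), "URLs,", len(xml_bytes), "bytes")
```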

There could be a number of explanations as to why this level of mismanagement was allowed to happen: lazy developers, inept SEO teams or agencies, or just a desire by upper management to push this through without knowing the full extent of the risks.

It does appear as if some level of recovery is taking place, as evidenced by the slight rise reported in Searchmetrics and Sistrix. This will be because Google is beginning to consolidate ranking signals to their correct pages.

Only time will tell if all the visibility comes back. Certainly it will not all return while the robots.txt issues remain, and this has been a very costly exercise. House of Fraser are now feeling the full force of overlooking technical SEO and in the space of a week have killed what has taken decades to build.

Proudly part of IPG Mediabrands